You can finish a shell in 18 months and still wait years for the first usable megawatt. For AI infrastructure teams in 2026, that is the hard truth behind many delayed launches.

The main risk is not land, fiber, or even GPUs. It is power timing. If your plan assumes that announced capacity, contracted capacity, and energized capacity are the same thing, the schedule will break long before the cluster goes live.

Why datacenter grid connection delays are worse in 2026

AI load growth has changed the shape of demand. A typical enterprise site might need a few megawatts. An AI training campus may ask for 50 MW, 100 MW, or far more in a single request. Some projects now target campuses above 1 GW, well beyond the spare capacity many local systems were built to handle.

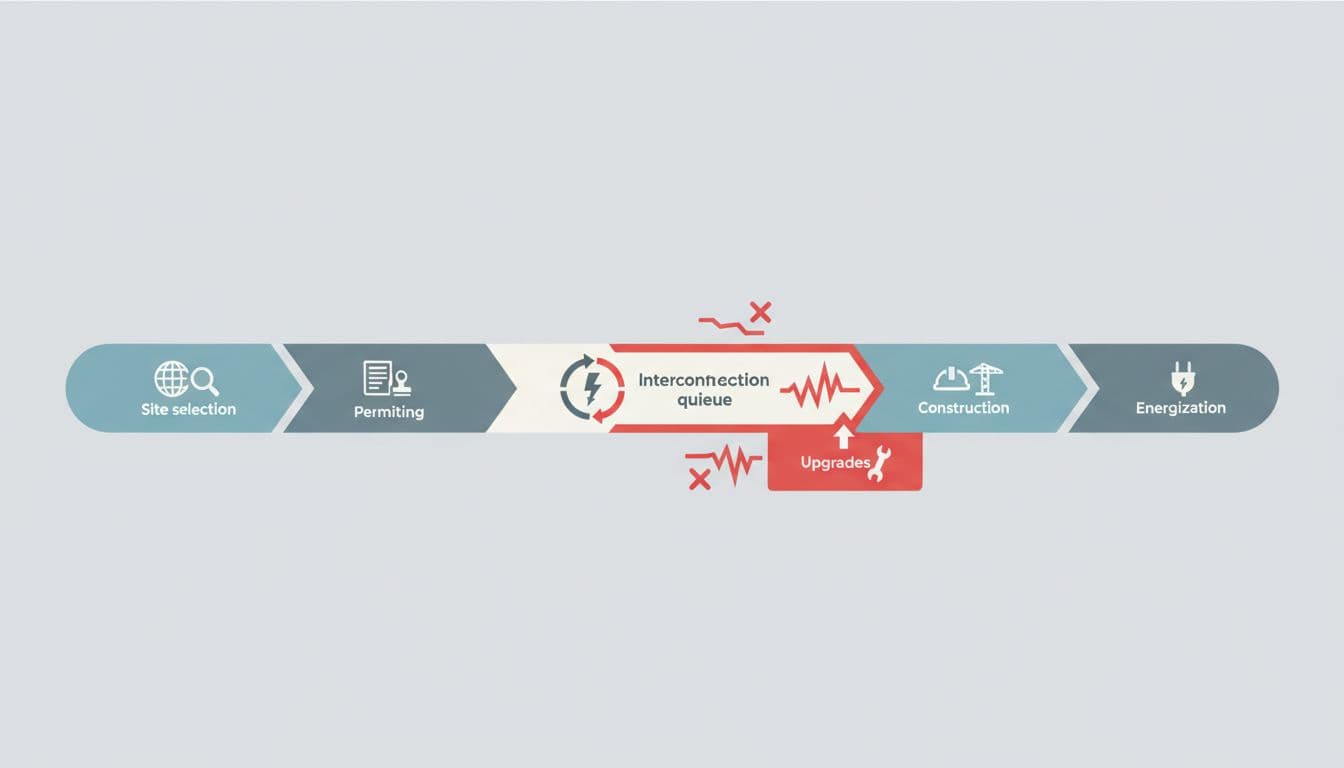

That creates the first delay. Utilities do not connect a large load by checking a box. They study the feeder, the substation, the upstream transmission path, and the effect on nearby customers. As RMI’s March 2026 analysis explains, queue backlogs are now a direct barrier to large new loads, not only to generators.

A second delay comes from the grid beyond the site fence. Your parcel may sit next to a substation, yet the real limit may be miles away on a transmission line or a transformer bank. That is why utilities often say a project has “available service” at first glance, then later attach network upgrades, long study windows, or phased energization.
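A rough way to picture why "available service" can shrink: the capacity a site can actually draw is capped by the tightest element on its supply path. The sketch below treats the path as a simple chain and takes the minimum headroom. That is a deliberate simplification (real utility studies model power flows and contingency cases), and every element name and MW figure is hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PathElement:
    name: str
    headroom_mw: float  # hypothetical spare capacity this element can give a new load


def deliverable_mw(path: list[PathElement]) -> tuple[float, str]:
    """Return the binding headroom and the element that sets it.

    Simplification: the path is a single chain, so the smallest headroom wins.
    """
    binding = min(path, key=lambda e: e.headroom_mw)
    return binding.headroom_mw, binding.name


# Hypothetical path: the substation next door looks roomy, the line upstream does not.
path = [
    PathElement("site feeder", 120.0),
    PathElement("adjacent substation", 90.0),
    PathElement("upstream transformer bank", 40.0),
    PathElement("transmission line", 25.0),
]
mw, limit = deliverable_mw(path)
print(f"{mw:.0f} MW, limited by {limit}")  # 25 MW, limited by transmission line
```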

Equipment bottlenecks make the timeline worse. Power transformers, gas-insulated switchgear (GIS), other switchgear, breakers, and relays still carry long lead times. If a substation upgrade needs gear that takes 20 to 36 months to arrive, the interconnection date slips with it. Construction crews cannot install equipment that has not been delivered.
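The dependency between lead times and energization can be sketched as a critical-path calculation: the slowest item gates the whole upgrade. This minimal version assumes every item is ordered on the same day and that installation and testing begin only after the last delivery. The lead times and the six-month install window are hypothetical, not vendor quotes.

```python
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, snapping to the 1st of the month."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


def earliest_energization(order_date: date, lead_times_months: dict, install_months: int):
    """Return (energization date, gating item) for a substation upgrade.

    Simplification: all items are ordered on order_date, and installation
    starts only after the longest-lead item arrives.
    """
    gating_item = max(lead_times_months, key=lead_times_months.get)
    arrival = add_months(order_date, lead_times_months[gating_item])
    return add_months(arrival, install_months), gating_item


# Hypothetical lead times in months.
lead_times = {
    "power transformer": 36,
    "GIS": 28,
    "breakers": 20,
    "relays": 12,
}
energized, gate = earliest_energization(date(2026, 3, 1), lead_times, install_months=6)
print(energized, gate)  # 2029-09-01 power transformer
```

Shortening any item other than the gating one does not move the date, which is why teams tend to chase the single longest-lead item first.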

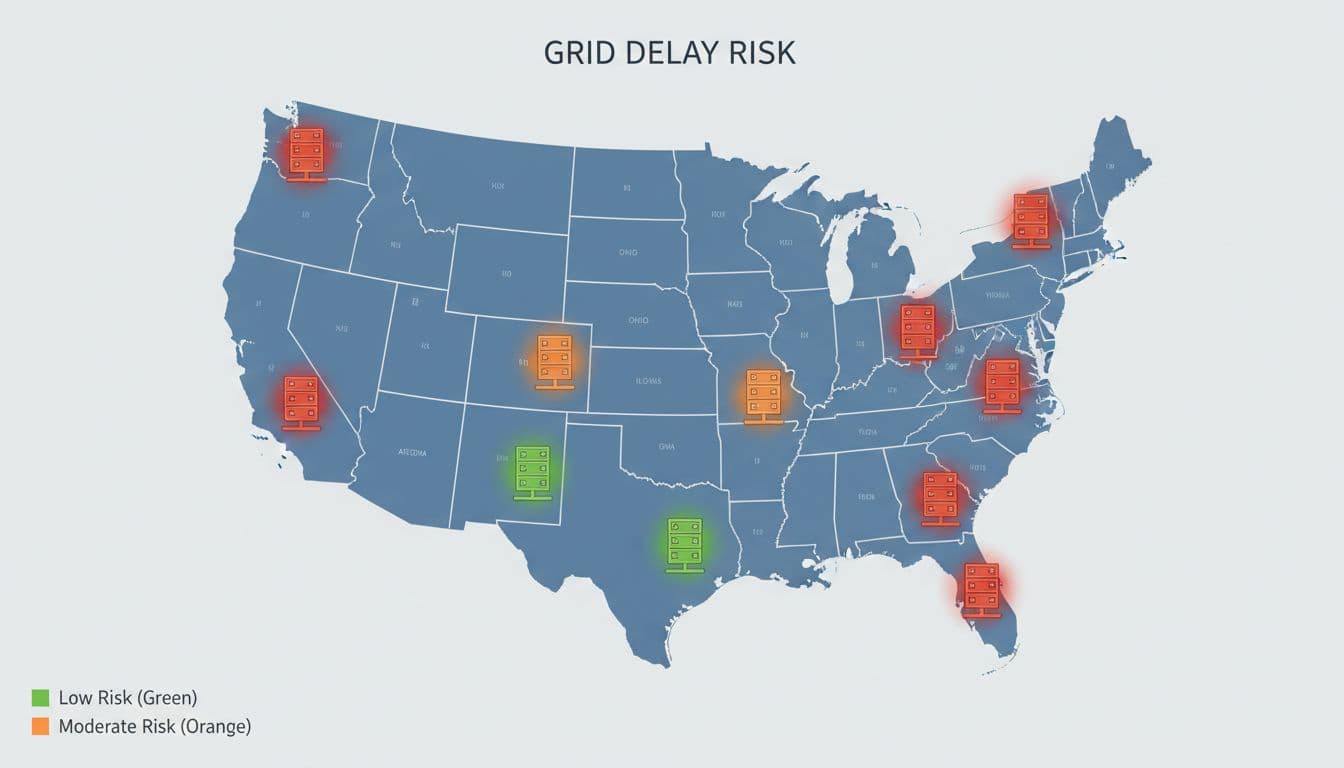

Recent Network World reporting described utilities in some markets quoting four to ten years for new large-load service, with one study period stretching to 12 years. Meanwhile, Data Center Frontier’s review of transmission constraints shows why local substation work is often not enough: the transmission system itself is lagging AI demand.

A queue position is a claim ticket, not a live power outlet.

Why power timelines reshape AI cluster deployment

Capacity planners need three separate definitions on every project. Mixing them together is one of the costliest mistakes in AI site planning.

This quick table keeps the terms straight:

| Capacity type | What it means | Why it matters |
|---|---|---|
| Near-term capacity | Power available soon, often with little or no major upgrade work | Best signal for the first hall or first cluster |
| Contracted capacity | Megawatts promised in a lease, utility letter, or development agreement | Useful for planning, but dates can slip |
| Energized capacity | Power that is physically live, tested, and ready for load | The only capacity that can run GPUs |

The takeaway is simple. Energized capacity is what deploys compute. Everything else is a forecast.
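One way to keep the three definitions from blurring in a planning tool is to record each tranche of power with exactly one status and count only energized MW as deployable. The class and field names below are hypothetical, and the ledger figures are illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class CapacityType(Enum):
    NEAR_TERM = "near-term"
    CONTRACTED = "contracted"
    ENERGIZED = "energized"


@dataclass
class Tranche:
    mw: float
    kind: CapacityType
    dependency: str = "none"  # what must happen before this MW is live


def totals_by_type(tranches: list) -> dict:
    """Sum MW per capacity type so the three numbers are never blended."""
    totals = {kind: 0.0 for kind in CapacityType}
    for t in tranches:
        totals[t.kind] += t.mw
    return totals


def deployable_mw(tranches: list) -> float:
    """Only energized capacity can run GPUs; everything else is a forecast."""
    return totals_by_type(tranches)[CapacityType.ENERGIZED]


# Hypothetical ledger for one site; each MW appears in exactly one tranche.
ledger = [
    Tranche(8.0, CapacityType.ENERGIZED),
    Tranche(8.0, CapacityType.NEAR_TERM, "relay settings and commissioning"),
    Tranche(64.0, CapacityType.CONTRACTED, "new transformer and network upgrade"),
]
print(sum(t.mw for t in ledger), deployable_mw(ledger))  # 80.0 8.0
```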

Consider a 120 MW AI cluster. The site team may secure a lease that promises 80 MW by late 2027 and full buildout later. Meanwhile, the utility may support only 16 MW in the near term, pending a new transformer and network upgrade. If procurement orders GPU racks for the full 80 MW window, the project can end up with idle equipment, staggered commissioning, and a revenue gap that no contract language fixes.
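The arithmetic behind that gap is short. The sketch below uses the example's 80 MW procurement and 16 MW near-term supply; the 100 kW per rack figure is a hypothetical round number, and the calculation treats all utility MW as IT load, so real cooling and conversion overhead would leave even more racks dark.

```python
import math


def stranded_racks(procured_mw: float, energized_mw: float, kw_per_rack: float):
    """Return (racks procured, racks that can be powered, racks left idle)."""
    procured = math.floor(procured_mw * 1000 / kw_per_rack)
    powered = min(procured, math.floor(energized_mw * 1000 / kw_per_rack))
    return procured, powered, procured - powered


# 80 MW and 16 MW come from the example; kw_per_rack=100 is hypothetical.
procured, powered, idle = stranded_racks(80.0, 16.0, kw_per_rack=100.0)
print(procured, powered, idle, f"{idle / procured:.0%}")  # 800 160 640 80%
```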

This is also where colocation selection gets harder. A provider may advertise “reserved power” or “future expansion capacity.” Those phrases can hide very different realities. Ask whether the power is already energized, whether it depends on a utility study still in process, and whether the seller controls the delivery schedule or only hopes for it. The Build guide to interconnection queues is useful here because it frames headroom, upgrade risk, and queue status as separate variables.

Phased builds help, but only if the phases are real. A sensible plan might launch 8 MW or 16 MW first, then add pods as power arrives. That lowers schedule risk and brings early revenue. Still, phase one should stand on its own economics. Too many plans assume phase two power will follow on time, then discover that the second tranche depends on transmission work outside the campus.
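A phase plan that follows energization rather than lease language can be sketched as a mapping from utility milestones to the pods each one can actually power. The 8 MW pod size echoes the first-phase figure above; the milestone dates and cumulative MW values are hypothetical, and the function name is illustrative.

```python
from datetime import date


def schedule_pods(milestones, pod_mw: float):
    """Map energization milestones to the pods each one can actually power.

    milestones: (date, cumulative energized MW) pairs in date order. These
    should be utility energization dates, not lease delivery dates.
    Returns (date, pods brought online at that date) pairs.
    """
    plan = []
    pods_live = 0
    for when, cumulative_mw in milestones:
        supportable = int(cumulative_mw // pod_mw)
        new_pods = supportable - pods_live
        if new_pods > 0:
            plan.append((when, new_pods))
            pods_live = supportable
    return plan


# Hypothetical milestones: 16 MW first, a 4 MW step too small for a pod,
# then a tranche that waits on transmission work outside the campus.
milestones = [
    (date(2027, 6, 1), 16.0),
    (date(2028, 3, 1), 20.0),
    (date(2029, 9, 1), 80.0),
]
for when, pods in schedule_pods(milestones, pod_mw=8.0):
    print(when, pods)
# 2027-06-01 2
# 2029-09-01 8
```

The long pause between the first two pods and the rest is the point: phase one has to carry its own economics for that whole stretch.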

If the utility cannot name a credible energization path, you do not have deployable AI capacity.

A planning framework that reduces schedule risk

The best response is not optimism. It is portfolio discipline. Treat power like a supply chain with hard dates, single points of failure, and staged delivery.

A workable screening model usually includes four checks:

- Verify near-term, contracted, and energized capacity separately, then tie each number to a date and dependency.

- Ask for the utility one-line path, required upgrades, study stage, and the long-lead equipment list.

- Build a phased deployment plan that matches actual energization milestones, not lease language.

- Keep at least two geographic options alive until power dates harden.
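The four checks can be encoded as a simple screen that returns the checks a site fails. This is a sketch under assumptions: the `Site` and `Claim` fields, the document keys, and the example site are all hypothetical, and a real review would weigh the answers rather than just test for their presence.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Claim:
    mw: float
    when: Optional[date] = None        # date the MW is (or became) live
    dependency: Optional[str] = None   # what must happen first; "none" if nothing


@dataclass
class Site:
    name: str
    claims: dict = field(default_factory=dict)       # "near_term"/"contracted"/"energized" -> Claim
    utility_docs: set = field(default_factory=set)   # documents actually received
    phases_follow_energization: bool = False


CLAIM_KINDS = ("near_term", "contracted", "energized")
REQUIRED_DOCS = {"one_line_path", "required_upgrades", "study_stage", "long_lead_equipment"}


def screen(site: Site, live_alternatives: int) -> list:
    """Return the checks a site fails; an empty list means it passes all four."""
    issues = []
    # Check 1: each capacity number is separate and tied to a date and dependency.
    for kind in CLAIM_KINDS:
        claim = site.claims.get(kind)
        if claim is None or claim.when is None or claim.dependency is None:
            issues.append(f"{kind}: missing MW, date, or dependency")
    # Check 2: the utility has supplied path, upgrades, study stage, and equipment list.
    missing = REQUIRED_DOCS - site.utility_docs
    if missing:
        issues.append("utility docs missing: " + ", ".join(sorted(missing)))
    # Check 3: phases follow energization milestones, not lease language.
    if not site.phases_follow_energization:
        issues.append("phase plan is not tied to energization milestones")
    # Check 4: at least one other market is still in play.
    if live_alternatives < 1:
        issues.append("no second geographic option kept alive")
    return issues


# Hypothetical site with one missing utility document.
site = Site(
    name="site A",
    claims={
        "energized": Claim(8.0, date(2026, 1, 15), "none"),
        "near_term": Claim(8.0, date(2026, 9, 1), "relay commissioning"),
        "contracted": Claim(64.0, date(2027, 12, 1), "transformer and network upgrade"),
    },
    utility_docs={"one_line_path", "required_upgrades", "study_stage"},
    phases_follow_energization=True,
)
print(screen(site, live_alternatives=1))
# ['utility docs missing: long_lead_equipment']
```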

Geographic diversification matters more in 2026 than it did two years ago. Northern Virginia and parts of PJM still matter because ecosystems, fiber, and labor are hard to match. Yet those same strengths attract large queues. Texas can move faster in the right pocket, but substation and transmission timing still decides outcomes. Midwestern and SPP-adjacent markets may show better near-term headroom, though some sites carry hidden network-upgrade exposure. The goal is not to find a perfect market. It is to avoid betting the whole AI rollout on one interconnection path.
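The value of keeping several interconnection paths open can be shown with a small selection rule: deploy in whichever market has the earliest credible energization date, and treat a market with no credible path as having no deployable capacity. The market names and dates are hypothetical placeholders, not assessments of any real region.

```python
from datetime import date
from typing import Optional


def earliest_credible(options: dict) -> Optional[tuple]:
    """Pick the market with the earliest credible energization date.

    options maps market name -> date, or None when the utility cannot name
    a credible energization path (which counts as no deployable capacity).
    """
    credible = {market: d for market, d in options.items() if d is not None}
    if not credible:
        return None
    market = min(credible, key=credible.get)
    return market, credible[market]


# Hypothetical markets and dates; rerun as each utility's dates firm up.
options = {
    "market A": date(2029, 6, 1),
    "market B": date(2028, 1, 1),
    "market C": None,
}
print(earliest_credible(options))  # ('market B', datetime.date(2028, 1, 1))

# If market B slips 24 months, the portfolio falls back to market A
# instead of stalling the whole rollout.
options["market B"] = date(2030, 1, 1)
print(earliest_credible(options))  # ('market A', datetime.date(2029, 6, 1))
```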

Onsite generation can help, but planners should treat it as a bridge, not magic. Gas turbines, engines, or fuel cells may cover a short gap or support a hybrid design. They also bring fuel delivery, permits, emissions limits, and reliability tradeoffs. Use them when the math works and the gap is short. Do not use them to paper over a weak grid strategy.
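The "short gap" test can be made explicit as a gate that runs before any bridge design work. In this sketch the 18-month ceiling is a hypothetical policy threshold rather than an industry rule, the function names are illustrative, and the economics and emissions review behind "when the math works" would still happen separately.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end (day of month ignored)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def bridge_ok(target: date, grid_date: date, max_bridge_months: int,
              permits_ready: bool, fuel_secured: bool) -> bool:
    """True only when onsite generation would cover a short, well-defined gap."""
    gap = months_between(target, grid_date)
    if gap <= 0:
        return False  # grid power arrives in time; no bridge needed
    return gap <= max_bridge_months and permits_ready and fuel_secured


# max_bridge_months=18 is a hypothetical threshold.
print(bridge_ok(date(2027, 6, 1), date(2028, 3, 1), 18, True, True))  # True (9-month gap)
print(bridge_ok(date(2027, 6, 1), date(2030, 6, 1), 18, True, True))  # False (36-month gap)
```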

Power dates now set AI deployment dates. Teams that plan against energized capacity, keep phased options real, and spread exposure across more than one market will place compute sooner and miss fewer launch windows.