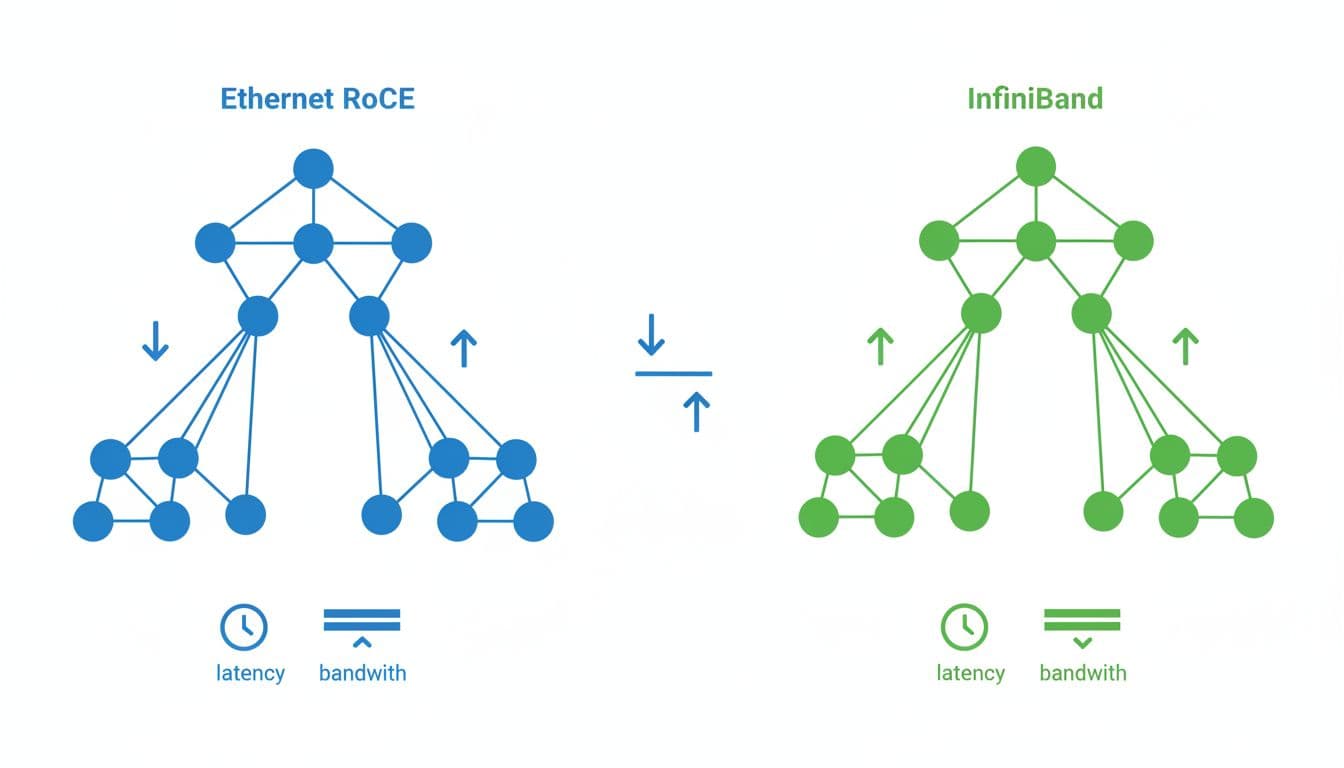

By 2026, the network fabric can decide whether expensive GPUs stay busy or sit idle during every training step. In the Ethernet versus InfiniBand debate, there isn’t a universal winner anymore.

Ethernet has moved from “good enough” to a serious default for many AI clusters. InfiniBand still leads when you need the lowest latency, tighter jitter control, and cleaner behavior at very large scale. The right answer depends on cluster size, workload shape, NCCL traffic patterns, and how much tuning your team can own.

What changed for AI fabrics in 2026

Both camps now live in the same speed class. AI clusters ship with 400G links today, while 800G Ethernet and InfiniBand XDR are now part of active build plans. On paper, that sounds like parity. In practice, AI training cares less about raw port speed than about congestion behavior during synchronized bursts.

By 2026, Ethernet is the mainstream choice for many new scale-out GPU builds because teams already know how to run IP fabrics, vendor choice is broader, and costs are lower. At the same time, InfiniBand keeps a real edge in native transport behavior and low, stable latency. Recent GPU network design coverage reflects the same split: Ethernet keeps widening its footprint, while InfiniBand stays strong in the most demanding training environments.

That matters because AI jobs create bursty east-west traffic. All-reduce, all-gather, and expert-parallel all-to-all patterns can hammer a fabric in ways a simple throughput test won’t show. A network that looks fast in isolation can still waste GPU time if latency spikes under incast or if switch queues back up during synchronized bursts.
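
To see why a throughput test alone says little, it helps to compute the best case first. The sketch below assumes a ring all-reduce, where each GPU sends roughly 2(N-1)/N times the message size, and one network link per GPU. The function names are illustrative, and NCCL may pick a different algorithm in practice, so treat the result as a floor rather than a prediction.

```python
def ring_allreduce_bytes_per_gpu(message_bytes: float, num_gpus: int) -> float:
    """Bytes each GPU sends in a ring all-reduce: 2 * (N - 1) / N * S."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return 2.0 * (num_gpus - 1) / num_gpus * message_bytes


def bandwidth_bound_seconds(message_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Lower bound on all-reduce time, ignoring latency, congestion, and overlap.

    Assumption: one link of `link_gbps` per GPU, fully usable by the collective.
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    link_bytes_per_second = link_gbps * 1e9 / 8.0
    return ring_allreduce_bytes_per_gpu(message_bytes, num_gpus) / link_bytes_per_second


if __name__ == "__main__":
    # Hypothetical example: a 1 GB gradient buffer over 400G links.
    for n in (8, 64, 1024):
        seconds = bandwidth_bound_seconds(1e9, n, 400.0)
        print(f"{n:>5} GPUs: {seconds * 1e3:.1f} ms bandwidth-only floor")
```

The floor barely moves as the GPU count grows, so whatever a real job spends above it comes from latency, incast, and queueing, not from port speed.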

Training efficiency, not port speed, decides the winner

For distributed training, the useful metric is not just bandwidth. It’s step-time efficiency. Every extra microsecond of exposed collective time is paid by every GPU in the job, and it is paid again on each of millions of iterations.
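
As a rough illustration of how small delays add up, the sketch below converts extra latency per collective into GPU-hours over a run. It assumes the extra latency sits on the critical path and is not hidden behind compute. The numbers in the example are hypothetical, not measurements.

```python
def extra_gpu_hours(
    extra_us_per_collective: float,
    collectives_per_step: int,
    steps: int,
    num_gpus: int,
) -> float:
    """GPU-hours spent waiting on extra collective latency.

    Assumption: the extra latency is fully exposed (no compute overlap),
    so every GPU in the job waits for it on every step.
    """
    extra_seconds_per_step = extra_us_per_collective * 1e-6 * collectives_per_step
    return extra_seconds_per_step * steps * num_gpus / 3600.0


if __name__ == "__main__":
    # Hypothetical job: 10 us extra per collective, 100 collectives per step,
    # one million steps, 1,024 GPUs.
    hours = extra_gpu_hours(10.0, 100, 1_000_000, 1024)
    print(f"{hours:.1f} GPU-hours spent waiting")
```

With overlap between communication and compute, the real cost would be lower, which is one reason to measure exposed time rather than total collective time.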

InfiniBand still has the cleaner profile here. Typical latency is lower and, more importantly, tail latency is usually tighter under load. Well-tuned RoCEv2 over Ethernet can come close, often landing in the 85 to 95 percent range of InfiniBand training throughput for mid-sized clusters. That gap is small for many teams, but it isn’t trivial when training runs last weeks. A recent RoCE vs InfiniBand analysis makes the same point for 256 to 1,024 GPU deployments.

The gap widens as communication takes a larger share of each step. Dense data-parallel training on 64 or 128 GPUs may barely notice. Large pretraining runs with tensor parallelism, pipeline parallelism, or MoE traffic will notice much more. If your jobs spend a large chunk of time in collectives, InfiniBand’s lower jitter can translate into better GPU utilization and shorter wall-clock training.
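
A simple model makes the widening gap concrete. The sketch below assumes step time is compute plus exposed communication, and that a slower fabric stretches only the communication part. Both function names and the 20 percent penalty are illustrative assumptions.

```python
def gpu_utilization(compute_ms: float, exposed_comm_ms: float) -> float:
    """Fraction of each step the GPUs spend computing."""
    return compute_ms / (compute_ms + exposed_comm_ms)


def relative_step_time(comm_fraction: float, comm_penalty: float) -> float:
    """Step time relative to baseline when communication gets slower.

    comm_fraction: share of baseline step time spent in exposed collectives (0 to 1).
    comm_penalty: fractional slowdown of communication (0.2 means 20% slower).
    """
    if not 0.0 <= comm_fraction <= 1.0:
        raise ValueError("comm_fraction must be between 0 and 1")
    return 1.0 + comm_fraction * comm_penalty


if __name__ == "__main__":
    # Hypothetical 20% communication penalty at different communication shares.
    for share in (0.05, 0.20, 0.40):
        slowdown = relative_step_time(share, 0.20)
        print(f"comm share {share:.0%}: step time x{slowdown:.2f}")
```

Under these assumptions, the same fabric penalty costs about 1 percent of step time when communication is 5 percent of the step, and about 8 percent when it is 40 percent.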

For fine-tuning, inference, RAG pipelines, and mixed-use enterprise clusters, Ethernet often wins anyway. Those environments usually value flexibility, multi-tenancy, and cost more than the last few percent of training speed.

Lossless Ethernet is the hard part

RoCEv2 is attractive because it keeps RDMA semantics on standard Ethernet. Still, Ethernet does not become lossless because you bought faster switches. You need PFC, ECN, and end-host congestion response such as DCQCN tuned as one system. You also need the right queue mapping, enough buffer headroom, and consistent policy across NICs, top-of-rack switches, spines, and any routed boundary.

RoCE problems usually come from configuration drift and congestion tuning, not from lack of port speed.

That is why RoCE deployments can disappoint at scale. A mismatch in PFC or ECN settings can cause drops, pause storms, or head-of-line blocking. A solid lossless Ethernet design guide walks through those failure modes in detail.
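
One way to catch drift early is to compare every device against a single baseline. The sketch below is a minimal example that assumes you can already export settings into plain dictionaries. The key names (`pfc_priority`, `ecn_enabled`, `roce_dscp`) and device names are hypothetical and do not correspond to any vendor's CLI or API.

```python
from typing import Dict, List

Settings = Dict[str, object]


def find_drift(baseline: Settings, devices: Dict[str, Settings]) -> List[str]:
    """Return one finding per setting that differs from the baseline."""
    findings: List[str] = []
    for name in sorted(devices):
        settings = devices[name]
        for key in sorted(baseline):
            expected = baseline[key]
            actual = settings.get(key, "<missing>")
            if actual != expected:
                findings.append(f"{name}: {key} is {actual!r}, expected {expected!r}")
    return findings


if __name__ == "__main__":
    # Hypothetical baseline and exported device settings.
    baseline = {"pfc_priority": 3, "ecn_enabled": True, "roce_dscp": 26}
    devices = {
        "nic-a": {"pfc_priority": 3, "ecn_enabled": True, "roce_dscp": 26},
        "tor-1": {"pfc_priority": 4, "ecn_enabled": True, "roce_dscp": 26},
        "spine-1": {"pfc_priority": 3, "roce_dscp": 26},
    }
    for finding in find_drift(baseline, devices):
        print(finding)
```

A check like this only proves the settings agree with each other. It says nothing about whether the baseline itself is tuned well, which still takes load testing.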

InfiniBand avoids much of this pain because credit-based flow control is native. That reduces operational guesswork and makes performance more repeatable. However, the tradeoff is a narrower ecosystem, tighter dependence on NVIDIA hardware, and a separate skills track. If your network team already runs large BGP and ECMP fabrics, Ethernet may still be easier overall, even if the tuning bar is higher for RoCE.
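
The idea behind credit-based flow control fits in a few lines. The toy model below is a sketch under simplifying assumptions: one sender, one receiver, one credit per buffer slot, and discrete ticks. It is not a model of real InfiniBand hardware, and all names are illustrative.

```python
from typing import Tuple


def simulate_credit_link(
    packets_to_send: int,
    buffer_slots: int,
    drain_per_tick: int,
    ticks: int,
) -> Tuple[int, int]:
    """Return (packets delivered to the receiver buffer, peak buffer occupancy)."""
    credits = buffer_slots  # sender starts with one credit per free slot
    queued = 0              # packets sitting in the receiver buffer
    sent = 0
    peak = 0
    for _ in range(ticks):
        # Receiver drains its buffer and returns one credit per freed slot.
        drained = min(queued, drain_per_tick)
        queued -= drained
        credits += drained
        # Sender transmits only as many packets as it holds credits for.
        burst = min(credits, packets_to_send - sent)
        credits -= burst
        queued += burst
        sent += burst
        peak = max(peak, queued)
    return sent, peak


if __name__ == "__main__":
    delivered, peak = simulate_credit_link(100, 8, 2, 1000)
    print(f"delivered={delivered}, peak buffer occupancy={peak}")
```

Because the sender never holds more credits than there are free slots, the buffer cannot overflow in this model, so nothing is dropped. A fast sender is simply throttled to the receiver's drain rate.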

A practical decision matrix for 2026

Operations often decide this purchase before microbenchmarks do. Ethernet fits shared data centers, multi-vendor sourcing, and teams that already manage large IP fabrics. InfiniBand makes more sense when the cluster is dedicated to large training jobs and every hour saved has real business value.

This quick matrix is a better starting point than any vendor slide:

| Scenario | Better fit | Why |
|---|---|---|
| Up to 256 GPUs, fine-tuning or inference-heavy | Ethernet with RoCEv2 | Lower cost, easier ops, performance gap is usually small |
| 256 to 2,048 GPUs, mixed training workloads | Usually Ethernet | Strong economics, broad vendor choice, good enough if RoCE is tuned well |
| 512 to 4,096 GPUs, communication-heavy training | Depends on traces | Benchmark your actual jobs; InfiniBand gains value as collectives dominate |
| 2,048+ GPUs, dedicated frontier-style pretraining | InfiniBand | Lower latency and steadier tail behavior improve scaling efficiency |
| Shared or multi-tenant AI cloud | Ethernet | Routed IP fabric, easier integration, better fit for mixed services |

A few rules hold up well in 2026. If your team lacks deep RoCE experience, budget time for fabric validation before buying at scale. If your jobs are mostly data-parallel and communication-light, Ethernet is hard to beat on total cost. If communication consumes a big share of step time, the InfiniBand premium can pay for itself through shorter training runs and better GPU occupancy.
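
For teams that want the matrix in a planning script, it can be written as a small lookup. The sketch below mirrors the rows above. The workload labels, the function name, and the order in which overlapping rows are checked are assumptions made for illustration, not part of the matrix itself.

```python
def suggest_fabric(num_gpus: int, workload: str, multi_tenant: bool = False) -> str:
    """Map a scenario onto the matrix. Returns the 'Better fit' column.

    workload is one of the hypothetical labels:
    'fine_tuning_or_inference', 'mixed_training',
    'communication_heavy_training', 'dedicated_pretraining'.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    # Assumption: shared or multi-tenant use takes precedence over size.
    if multi_tenant:
        return "Ethernet"
    if workload == "dedicated_pretraining" and num_gpus >= 2048:
        return "InfiniBand"
    if workload == "communication_heavy_training" and 512 <= num_gpus <= 4096:
        return "Depends on traces"
    if workload == "mixed_training" and 256 <= num_gpus <= 2048:
        return "Usually Ethernet"
    if workload == "fine_tuning_or_inference" and num_gpus <= 256:
        return "Ethernet with RoCEv2"
    # Anything else falls outside the rows in the matrix.
    return "Not covered; benchmark your own jobs"


if __name__ == "__main__":
    print(suggest_fabric(128, "fine_tuning_or_inference"))
    print(suggest_fabric(1024, "communication_heavy_training"))
    print(suggest_fabric(4096, "dedicated_pretraining"))
```

The fallback branch matters as much as the rows. Scenarios that the matrix does not cover should send you to workload traces, not to a default.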

Conclusion

The Ethernet versus InfiniBand choice is no longer a simple speed-versus-cost argument. Ethernet is now a strong, often better option for many AI clusters, especially when operational fit and budget matter as much as raw performance.

InfiniBand still earns its place where training efficiency depends on the lowest possible latency and the most stable behavior under heavy collective traffic. The safest decision starts with workload traces, cluster scale, and the team’s operating model, not with the number printed on the switch.