OpenTelemetry is free. Your observability bill isn’t. In high-volume Kubernetes clusters, the hard part isn’t turning telemetry on. It’s stopping useful data from becoming an expensive flood.

Most teams see the pain after rollout. The collector looks cheap, then ingestion, index growth, retention, egress, and query load start piling up. Add the hours spent tuning pipelines, and the real OpenTelemetry cost shows up fast.

Once volume passes a certain point, small design choices become budget problems.

The collector is cheap, the pipeline is not

A lot of cost discussions stop at collector CPU and memory. That’s only the first line item. The bigger spend usually sits downstream in backend ingestion, storage, hot indexing, query scans, network egress, and engineering time.

OpenTelemetry itself is not the expensive part. The expensive part is everything you choose to emit, move, store, and keep searchable.

Current 2026 benchmarks for large Kubernetes estates often land between about $4,100 and more than $6,000 per month. In one published example, monthly collector compute was about $280, storage $1,156, network $130, and engineer time $2,500, for a total of roughly $4,070. For a grounded model, see this cost breakdown at scale.
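Summing the line items from that published example shows where the money goes. The sketch below is plain Python arithmetic on the quoted figures and assumes nothing else.

```python
# Monthly line items from the published example quoted above, in USD.
line_items = {
    "collector_compute": 280,
    "storage": 1156,
    "network": 130,
    "engineer_time": 2500,
}

def cost_shares(items: dict[str, float]) -> dict[str, float]:
    """Return each line item's share of the monthly total, in percent."""
    total = sum(items.values())
    return {name: 100 * value / total for name, value in items.items()}

total = sum(line_items.values())
shares = cost_shares(line_items)

print(f"total: ${total:,} per month")  # total: $4,066 per month
for name, pct in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:>17}: {pct:4.1f}%")
```

Collector compute comes out under 7% of the total, while engineer time is over 60%. In this example, the collector really is the cheap part.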

This is where Kubernetes changes the math. Pod churn increases time series count. Autoscaling changes label sets. Service meshes add spans at every proxy hop. Short-lived jobs generate bursts that look small in isolation but large in aggregate.

A simple way to frame the spend is this:

| Cost layer | What drives it in Kubernetes |
|---|---|
| Collector compute | Batching, retries, queues, tail sampling state |
| Backend ingest and index | Per-GB logs, active metric series, trace writes |
| Query and egress | Cross-zone traffic, dashboard fan-out, ad hoc searches |
| Team overhead | Pipeline tuning, schema cleanup, noisy incident triage |

Backend pricing makes this sharper. Some platforms charge on ingest, others on active series, index size, or query volume. In a noisy cluster, all of those can rise together.
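To see why those dimensions compound, here is a small Python sketch that prices the same month three ways. The unit prices and usage figures are hypothetical placeholders, not any vendor's rates. Only the shape of the result matters.

```python
from dataclasses import dataclass

# Hypothetical unit prices for illustration only; real vendor rates differ.
PRICE_PER_LOG_GB = 0.50
PRICE_PER_1K_SERIES = 8.00
PRICE_PER_TB_SCANNED = 5.00

@dataclass
class MonthlyUsage:
    log_gb: float            # GB of logs ingested
    active_series: int       # average active metric series
    query_tb_scanned: float  # TB scanned by dashboards and ad hoc queries

def bill_by_dimension(u: MonthlyUsage) -> dict[str, float]:
    """Price one month of usage along three common billing dimensions."""
    return {
        "ingest": u.log_gb * PRICE_PER_LOG_GB,
        "active_series": u.active_series / 1000 * PRICE_PER_1K_SERIES,
        "query": u.query_tb_scanned * PRICE_PER_TB_SCANNED,
    }

# Hypothetical usage: a quiet month versus one with more churn and noisier logs.
quiet = MonthlyUsage(log_gb=2_000, active_series=150_000, query_tb_scanned=40)
noisy = MonthlyUsage(log_gb=3_000, active_series=400_000, query_tb_scanned=90)

before, after = bill_by_dimension(quiet), bill_by_dimension(noisy)
for dim in before:
    print(f"{dim:>13}: ${before[dim]:,.0f} -> ${after[dim]:,.0f}")
```

The same noisy change (more log volume, more pods per service, heavier dashboards) raises all three dimensions at once, so no single pricing model shields you from it.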

The patterns that make telemetry bills spike

High spend usually comes from a few repeat mistakes, not from OpenTelemetry itself.

High-cardinality labels are a common one. If app metrics include pod_uid, container_id, session_id, or raw URL paths, your series count can explode. A single counter becomes thousands of active series, and every dashboard query gets heavier.
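The growth is multiplicative, which is why a single extra label hurts so much. Here is a minimal Python sketch using hypothetical label counts for one counter. The worst-case series count is the product of each label's distinct values.

```python
from math import prod

def worst_case_series(cardinalities: dict[str, int]) -> int:
    """Upper bound on active series for one metric: every combination of
    label values can become its own time series."""
    return prod(cardinalities.values())

# Hypothetical distinct-value counts for one request counter.
base = {"method": 4, "status_code": 5, "route": 20}
with_pod = {**base, "pod_uid": 30}
# Raw URL paths instead of route templates, e.g. /users/1234 vs /users/{id}.
raw_paths = {"method": 4, "status_code": 5, "raw_path": 5_000, "pod_uid": 30}

print(worst_case_series(base))       # 400
print(worst_case_series(with_pod))   # 12000
print(worst_case_series(raw_paths))  # 3000000
```

Real counts are lower because not every combination occurs. The ratio still makes the point: adding pod_uid multiplies the series by the number of pods, and swapping route templates for raw paths multiplies it again.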

Verbose logs are another fast path to overspend. Teams often ship full request bodies, large JSON payloads, repeated stack traces, and every successful health check. That data is costly to ingest, index, and search, even when nobody reads it.

Trace volume gets out of hand in similar ways. If you trace 100% of requests for chatty internal services, or every mesh hop, spans multiply fast. Tail latency debugging gets easier, but storage and query bills climb with it.
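A back-of-the-envelope Python sketch makes the multiplication visible. The request rate and span counts are hypothetical, as is the assumption that each mesh hop adds two proxy spans. Substitute your own numbers.

```python
SECONDS_PER_DAY = 86_400

def spans_per_day(rps: float, app_spans: int, mesh_hops: int,
                  spans_per_hop: int, sample_rate: float) -> float:
    """Estimate spans written per day for one request path.

    app_spans: spans from application instrumentation per request.
    mesh_hops * spans_per_hop: extra proxy spans added by the mesh.
    sample_rate: fraction of traces kept (1.0 = trace everything).
    """
    spans_per_request = app_spans + mesh_hops * spans_per_hop
    return rps * SECONDS_PER_DAY * spans_per_request * sample_rate

# Hypothetical chatty internal path: 500 req/s, 12 app spans, 6 mesh hops,
# assuming each hop adds 2 proxy spans (client side and server side).
for rate in (1.0, 0.1):
    print(f"sample_rate={rate}: {spans_per_day(500, 12, 6, 2, rate):,.0f} spans/day")
```

At 100% this one path writes roughly a billion spans a day. At a 10% sample rate it writes about a tenth of that.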

Duplicate pipelines hurt more than many teams expect. A service may export OTLP directly while Prometheus also scrapes it. Logs may flow through Fluent Bit and an OTel collector at the same time. Auto-instrumentation and manual SDK code can overlap too. You pay twice for the same story.
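Duplicates are easy to miss because the two copies rarely look identical. One rough way to audit for them, sketched in Python, is to normalize metric names and compare only a few identifying labels, because scrape-added labels such as job and instance differ by path. Then flag any identity that arrives from more than one source. The record format, the normalize() rules, and the choice of service as the identifying label are assumptions for illustration.

```python
from collections import defaultdict

def normalize(name: str) -> str:
    """Hypothetical, simplified name normalization so an OTLP name such as
    'http.server.requests' matches a Prometheus-style
    'http_server_requests_total'. Real translation rules have more cases."""
    return name.replace(".", "_").removesuffix("_total")

def find_duplicates(records, key_labels=("service",)):
    """records: iterable of (source, metric_name, labels) tuples.

    Groups series by normalized name plus the chosen identifying labels and
    returns identities that arrive through more than one ingestion path.
    """
    sources = defaultdict(set)
    for source, name, labels in records:
        identity = (normalize(name), tuple(labels.get(k) for k in key_labels))
        sources[identity].add(source)
    return {ident: srcs for ident, srcs in sources.items() if len(srcs) > 1}

records = [
    ("otlp", "http.server.requests", {"service": "checkout"}),
    ("prometheus", "http_server_requests_total",
     {"service": "checkout", "job": "checkout", "instance": "10.0.3.7:9464"}),
    ("prometheus", "queue_depth", {"service": "checkout"}),
]
for identity, srcs in find_duplicates(records).items():
    print(identity, sorted(srcs))
```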

Short-lived workloads add another edge case. CronJobs, CI runners, and batch pods generate setup logs, startup metrics, and short traces that often have low long-term value. In busy clusters, over-collecting these workloads creates a steady background tax.

Collector sizing also matters. Undersized collectors back up, retry, and drop under load. Oversized DaemonSets waste node memory across the fleet. If you need a reference for right-sizing, this guide on right-sizing pods with OpenTelemetry data is a useful starting point.
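Because a DaemonSet runs one collector per node, a per-pod sizing error is multiplied by the node count. Here is a minimal Python sketch of that arithmetic. The fleet size, memory request, usage figure, and 25% headroom are all hypothetical.

```python
def daemonset_waste_gib(nodes: int, request_mib: float, p95_usage_mib: float,
                        headroom: float = 0.25) -> float:
    """Memory requested but unused across a collector DaemonSet, in GiB.

    Keeps `headroom` (25% by default) above observed p95 usage as a buffer
    for bursts and retry queues; anything requested beyond that counts as
    waste. Returns 0 when the request is already at or below the target,
    which is the undersized case where backpressure and drops become a risk.
    """
    target_mib = p95_usage_mib * (1 + headroom)
    waste_per_node_mib = max(0.0, request_mib - target_mib)
    return nodes * waste_per_node_mib / 1024

# Hypothetical fleet: 200 nodes, 1 GiB requested per agent, 300 MiB p95 usage.
print(f"{daemonset_waste_gib(200, 1024, 300):.1f} GiB reserved but idle")
```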

Practical controls that reduce cost without blinding your team

The goal isn’t less visibility. The goal is better signal per dollar.

For traces, sampling is still the strongest lever, but the tradeoff matters. Head sampling is cheap and predictable because it decides early. However, it can drop the rare slow trace you wanted later. Tail sampling keeps errors and outliers, but it needs collector memory and state. In high-volume clusters, a common pattern is light head sampling at the edge, then selective tail sampling at a gateway for a small set of critical services. This cost reduction guide covers that tradeoff well.
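The two-stage pattern can be sketched in Python as two functions: a cheap, stateless head decision, and a tail decision that needs the finished trace. The trace model, service names, and thresholds are hypothetical. In a real Collector the tail stage is configuration rather than custom code, and the contrib tail sampling processor offers comparable latency, status code, and probabilistic policies.

```python
import random
from dataclasses import dataclass

def head_sample(trace_id: int, ratio: float) -> bool:
    """Head decision made when a trace starts, from the trace ID alone.

    Deterministic, so every service computes the same verdict for a trace.
    Simplified: compares the low 64 bits of the ID against ratio * 2**64.
    SDK ratio samplers follow a similar idea but differ in detail.
    """
    threshold = int(ratio * (1 << 64))
    return (trace_id & ((1 << 64) - 1)) < threshold

@dataclass
class FinishedTrace:
    trace_id: int
    service: str
    duration_ms: float
    has_error: bool

def tail_keep(t: FinishedTrace, critical: set[str], slow_ms: float,
              baseline_ratio: float, rng: random.Random) -> bool:
    """Tail decision made at a gateway after the whole trace is buffered."""
    if t.service not in critical:
        return True  # non-critical services rely on head sampling only
    if t.has_error or t.duration_ms >= slow_ms:
        return True  # always keep errors and latency outliers
    return rng.random() < baseline_ratio  # keep a small share of normal traces

rng = random.Random(7)
trace = FinishedTrace(trace_id=123, service="checkout",
                      duration_ms=2400, has_error=False)
kept = head_sample(trace.trace_id, ratio=0.2) and tail_keep(
    trace, critical={"checkout"}, slow_ms=1000, baseline_ratio=0.05, rng=rng)
print(kept)  # True: passed head sampling and is slower than slow_ms
```

The sketch also shows the limit of the pattern. A trace dropped by head_sample never reaches tail_keep, so head sampling in front of critical services has to stay light.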

For metrics, cardinality control has to be deliberate. Keep stable dimensions such as service, region, and environment. Drop labels tied to instance churn unless you truly query them. Review histogram buckets, because every extra bucket adds storage and query cost for each label combination. If exemplars don’t help your workflows, don’t keep them everywhere.
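Both controls are easy to reason about in code. The Python sketch below applies a hypothetical label allowlist and counts series before and after. It then counts the series a Prometheus-style histogram produces for two bucket layouts. The allowlist, label values, and bucket counts are illustrative assumptions. In practice the dropping usually happens in the collector or in SDK views, not in application code.

```python
# Hypothetical allowlist: stable dimensions you actually query on.
ALLOWED_LABELS = {"service", "region", "environment", "route", "status_code"}

def keep_allowed(labels: dict[str, str]) -> dict[str, str]:
    """Drop every label that is not on the allowlist."""
    return {k: v for k, v in labels.items() if k in ALLOWED_LABELS}

def distinct_series(label_sets, transform=lambda labels: labels) -> int:
    """Count distinct series among label sets, optionally after a transform."""
    return len({tuple(sorted(transform(ls).items())) for ls in label_sets})

def classic_histogram_series(bucket_bounds: int, label_sets: int) -> int:
    """Prometheus-style histogram: one series per bound, one for +Inf,
    plus _sum and _count, repeated for every label set."""
    return label_sets * (bucket_bounds + 1 + 2)

# Three pods reporting the same route and status for one service.
points = [
    {"service": "checkout", "route": "/cart", "status_code": "200",
     "pod_uid": f"uid-{i}"}
    for i in range(3)
]
print(distinct_series(points))                # 3
print(distinct_series(points, keep_allowed))  # 1
print(classic_histogram_series(15, 100))      # 1800
print(classic_histogram_series(6, 100))       # 900
```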

Logs need the same discipline. Filter low-value events before export. Redact or drop large payload fields. Keep short retention for noisy access logs, and longer retention for security, audit, or error streams. Route debug logs to cheaper storage if you need them for a short window. Batching and compression also help, because they lower exporter overhead and network spend.
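Here is a minimal Python sketch of that filtering. It assumes logs arrive as JSON-style dicts with path, status, and payload fields, all of which are hypothetical names. It drops successful health checks, removes body fields, truncates oversized strings, and gzips the batch before export. Retention tiers and routing to cheaper storage are backend settings and are not shown.

```python
import gzip
import json
from typing import Optional

# Hypothetical policy values; tune them to your own log sources.
HEALTH_PATHS = {"/healthz", "/readyz"}
DROP_FIELDS = {"request_body", "response_body"}
MAX_FIELD_CHARS = 256

def trim(record: dict) -> Optional[dict]:
    """Return a smaller copy of a log record, or None to drop it entirely."""
    if record.get("path") in HEALTH_PATHS and record.get("status", 0) < 400:
        return None  # successful health checks carry little signal
    out = {}
    for key, value in record.items():
        if key in DROP_FIELDS:
            continue  # large payloads: drop the field before export
        if isinstance(value, str) and len(value) > MAX_FIELD_CHARS:
            value = value[:MAX_FIELD_CHARS] + "...[truncated]"
        out[key] = value
    return out

def export_batch(records: list[dict]) -> bytes:
    """Trim, filter, and gzip a batch as newline-delimited JSON."""
    kept = [r for r in map(trim, records) if r is not None]
    body = "\n".join(json.dumps(r) for r in kept)
    return gzip.compress(body.encode("utf-8"))

batch = [
    {"path": "/healthz", "status": 200},
    {"path": "/checkout", "status": 500, "msg": "payment timeout",
     "request_body": "x" * 10_000},
] * 50
payload = export_batch(batch)
raw = "\n".join(json.dumps(r) for r in batch).encode("utf-8")
print(f"{len(raw):,} raw bytes -> {len(payload):,} bytes after trim + gzip")
```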

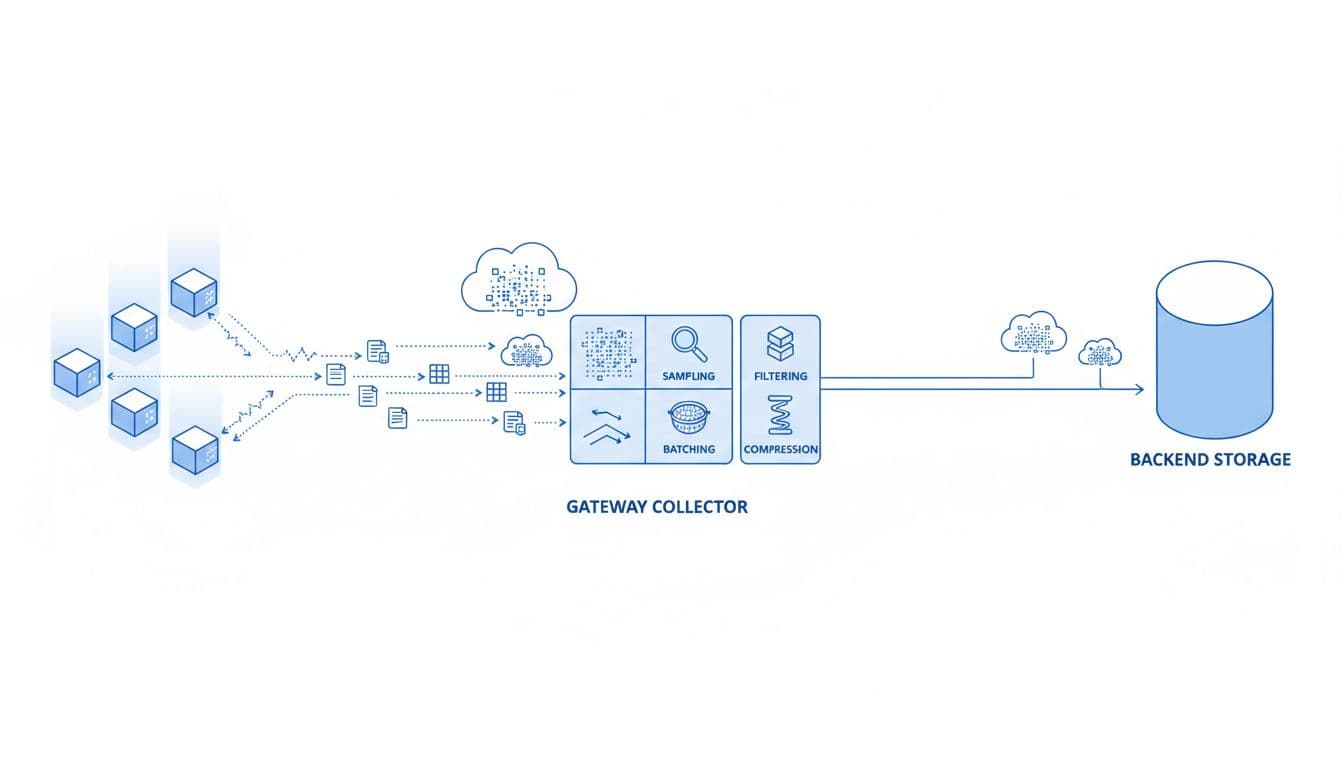

Routing matters as well. A gateway collector can apply filtering, sampling, and tenant-aware routing once, instead of repeating the same work in every pod. A two-tier design is often more efficient than sidecar-heavy deployment patterns, as shown in this Kubernetes cost optimization example.
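The gateway's job reduces to "filter once, then fan out by tenant". Here is a Python sketch of that logic, using hypothetical tenant names, backend names, and a hypothetical tenant attribute. In a real Collector this is configuration, not application code, and the contrib distribution includes a routing component for it.

```python
from collections import defaultdict
from typing import Callable, Iterable

Record = dict[str, str]

def route_batch(records: Iterable[Record],
                routes: dict[str, str],
                default: str,
                keep: Callable[[Record], bool]) -> dict[str, list[Record]]:
    """Filter once at the gateway, then group survivors by destination
    using the record's tenant attribute."""
    out: dict[str, list[Record]] = defaultdict(list)
    for record in records:
        if not keep(record):
            continue
        destination = routes.get(record.get("tenant", ""), default)
        out[destination].append(record)
    return dict(out)

# Hypothetical tenants and backend names.
routes = {"payments": "backend-payments", "search": "backend-search"}
incoming = [
    {"tenant": "payments", "level": "error"},
    {"tenant": "search", "level": "debug"},
    {"tenant": "search", "level": "info"},
    {"tenant": "reports", "level": "info"},
]
batches = route_batch(incoming, routes, default="backend-shared",
                      keep=lambda r: r.get("level") != "debug")
for destination, items in batches.items():
    print(destination, len(items))
```

Doing this once at the gateway means the filter rule lives in one place, instead of being repeated in every pod-level agent.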

The last control is governance. Set attribute allowlists. Review telemetry schema in pull requests. Give teams service-level data budgets. Then publish a monthly report that shows the top spenders by service, label set, and log source.
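The budget and report step can start as a small script over per-source usage data. Here is a Python sketch that assumes usage is already exported as (service, source, GB) rows. The allowlist, attribute names, budgets, and figures are hypothetical. The allowlist check is the kind of test a pull-request pipeline could run on proposed telemetry schema changes.

```python
from collections import defaultdict

# Hypothetical allowlist and per-service monthly data budgets (GB).
ATTRIBUTE_ALLOWLIST = {"service.name", "deployment.environment", "http.route"}
BUDGETS_GB = {"checkout": 100.0, "search": 250.0, "auth": 50.0}

def unapproved_attributes(proposed: set[str]) -> set[str]:
    """Attributes a pull request adds that are not on the allowlist."""
    return proposed - ATTRIBUTE_ALLOWLIST

def monthly_report(usage, budgets, top_n=3):
    """usage: iterable of (service, source, gb) tuples.

    Returns the top spenders by (service, source) and the services that
    exceeded their budget, with the overage in GB.
    """
    by_source = defaultdict(float)
    by_service = defaultdict(float)
    for service, source, gb in usage:
        by_source[(service, source)] += gb
        by_service[service] += gb
    top = sorted(by_source.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    over = {s: gb - budgets[s] for s, gb in by_service.items()
            if s in budgets and gb > budgets[s]}
    return top, over

usage = [
    ("checkout", "access_logs", 120.0),
    ("checkout", "traces", 40.0),
    ("search", "debug_logs", 300.0),
    ("auth", "audit_logs", 20.0),
]
print(unapproved_attributes({"http.route", "session.id"}))  # {'session.id'}
top, over = monthly_report(usage, BUDGETS_GB)
print(top)
print(over)  # {'checkout': 60.0, 'search': 50.0}
```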

Conclusion

High-volume Kubernetes clusters don’t get expensive because OpenTelemetry has a license fee. They get expensive when telemetry moves through the stack without limits.

The safest way to control cost is to treat instrumentation, collectors, backend design, and team time as one system. In practice, selective collection beats maximum collection. It keeps observability useful when the cluster, and the bill, start to scale.